IoSR Blog: 23 September 2013

Reflections on the AES International Conference

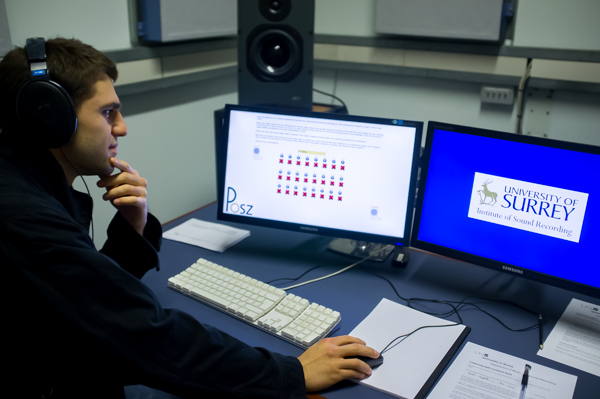

We recently hosted the 52nd International Audio Engineering Society conference at Surrey on the topic of Sound Field Control. One of the main aims of the conference was to bring engineers and psychoacousticians together to work on this application area: this is something that has arisen from our research on Perceptually Optimised Sound Zones (POSZ - you can find out more at www.posz.org).

There has been much work on the engineering side of sound field control, including the development of Wave Field Synthesis and the creation of multiple separate zones of sound within a single acoustic space. The results of this research are often expressed as measurements of instantaneous pressure across an area, or as differences in SPL between a target zone and an interference zone. Conclusions are often of the ilk: "this system increases the contrast from 12 dB to 15 dB".
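For readers unfamiliar with the contrast figure quoted above, here is a minimal sketch assuming one common definition of acoustic contrast: the spatially averaged squared pressure in the target ("bright") zone divided by that in the interference ("dark") zone, expressed in dB. The function names and the idea of sampling complex pressures at a handful of microphone positions are illustrative assumptions, not the method of any particular paper presented at the conference.

```python
import math


def acoustic_contrast_db(p_bright, p_dark):
    """Hypothetical helper: acoustic contrast in dB.

    Assumes p_bright and p_dark are sequences of complex (or real) sound
    pressures sampled at microphone positions in the target and
    interference zones respectively. This definition is an assumption.
    """
    # Spatially averaged squared pressure magnitude in each zone
    energy_bright = sum(abs(p) ** 2 for p in p_bright) / len(p_bright)
    energy_dark = sum(abs(p) ** 2 for p in p_dark) / len(p_dark)
    return 10.0 * math.log10(energy_bright / energy_dark)


def energy_ratio_change(old_db, new_db):
    """Hypothetical helper: factor by which the bright/dark energy ratio
    changes when contrast moves from old_db to new_db."""
    return 10.0 ** ((new_db - old_db) / 10.0)


if __name__ == "__main__":
    # Bright zone: unit-magnitude pressures; dark zone: 0.1 magnitude
    print(acoustic_contrast_db([1.0, 1j, -1.0], [0.1, 0.1]))  # close to 20 dB
    print(energy_ratio_change(12.0, 15.0))  # roughly 2
```

A 3 dB improvement, as in the quoted conclusion, corresponds to roughly doubling the energy ratio between the zones. The calculation is purely physical: nothing in it captures what a listener in either zone actually hears.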

We presumably want to use this technology for people to listen to things at some point, so what does the above conclusion tell you about whether it's any good? Yup, not really very much.

How good is it really?

This is where the collaboration with psychoacoustics is important. Unless we listen to these systems, we won't know how good they are, or even how best to improve them. This could be as simple as the engineer critically listening to what he's created, but it could be so much more: if we can predict what listeners will think of a system, then we can use this to evaluate completed systems, to help identify the areas for improvement that will have the greatest subjective effect, or even to tune the system automatically as it is used.

One of the highlights of the conference for me was a workshop that explored these factors. Among others, Filippo Fazi from the University of Southampton expressed a wish for a prediction tool to aid his work. We are working on such prediction models for sound zones, and two of our PhD students presented work in this area.

How good are our predictions?

At the moment our prediction models for sound zones are at an early stage. Initial results are encouraging, but there is still much to do. We need to be careful about how we use them, though: incomplete psychoacoustic prediction models can have significant problems, two of which are worth discussing in detail here.

Firstly, until we've managed to model every aspect of a listener's perception, we can't be confident that the resulting models will work in all situations and for all stimuli. Usually, when we develop these models, we do so for a specific purpose or a specific set of stimuli. Take an ITU-standardised model such as PEAQ as an example. It was created for predicting the quality of low-bit-rate monophonic codecs. However, it is all too often used for purposes for which it was never intended, such as evaluating analogue-to-digital converters, impulse response regularisation, musical instrument synthesis, and audio mastering. When creating and using subjective models, we need to be careful not to expect too much from them, and to use them only as intended. They're a long way from being all-encompassing, and we need to respect that.

Secondly, there is a danger of measuring what we know we can measure, and not necessarily what is most important. You can see evidence of this in Government performance analysis of schools and hospitals, and, dare I say it, University league tables. You can fairly easily derive a metric by measuring what is easy to measure, but is it truly representative of the quality of the item? For instance, are GCSE results really representative of whether a school is good or not? Firstly, it's unfair because it doesn't take into account the resources or the type of students that a school has. Secondly, it doesn't take into account the ethos, the pastoral care, the extra-curricular activities, and many other aspects. However, GCSE results are easy to measure, so they end up being used as the main metric of importance, perhaps to the exclusion of all others.

The other problem with incomplete measures of quality is that people inevitably end up working to improve the measured results, and can either miss the bigger picture or miss other attributes that might be of more importance to the end user. To use the example of Government quality metrics again, tracking waiting times led to dramatic improvements in those figures, but was this perhaps at the expense of other factors such as hospital cleanliness and infection rates?

So what's the conclusion?

Metrics that predict the perceived quality of something can be a very useful tool for developing audio systems, such as those for sound field control. Using such predictions, if they're effective, can save many hours of prototyping and testing. If we implement them cleverly, we can even use them to tune a system automatically whilst it's in use. That could make a significant improvement to audio quality.

However, we need to develop the prediction models carefully, treat them with caution, and only apply them to the applications for which they were developed. And it's probably worth backing up the measurements with listening tests too - just to make sure.

At least, until we've built a complete human simulator.

by Russell Mason