Intelligent Hearables with Environment-Aware Rendering (InHEAR)

Research Student: Milap Rane (IoSR)

Research Student: Andrés Estrella Terneux (CVSSP)

Research Student: Adèle Simon (Bang & Olufsen)

Supervisor: Dr Philip Coleman

Supervisor: Dr Philip Jackson

Supervisor: Dr Russell Mason

Industrial Partner & Funding: Bang & Olufsen

Additional Funding from: University of Surrey Doctoral College

Start date: 2020

End date: 2023

Project Outline

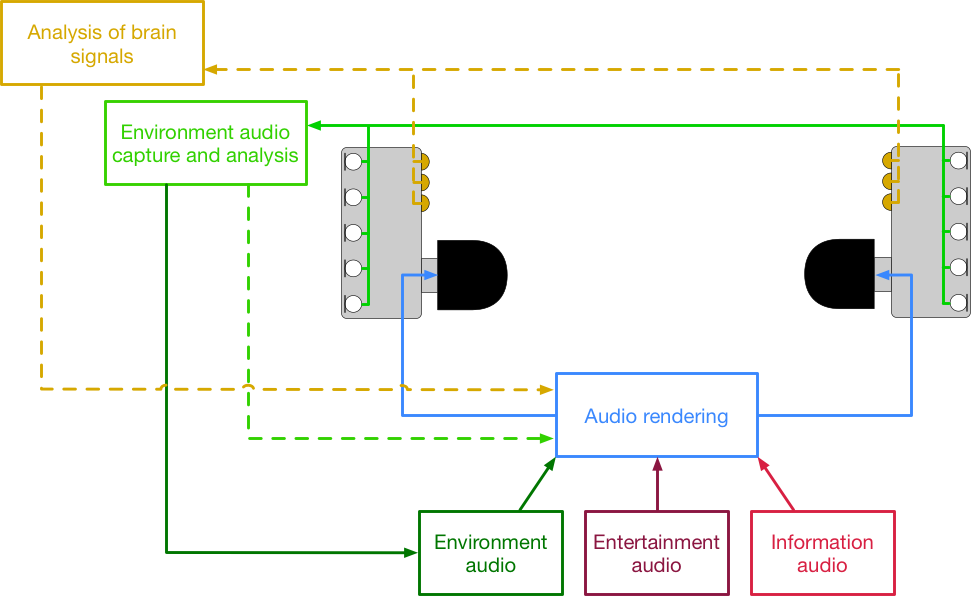

"Hearables" (in-ear headphones with built-in microphones and signal-processing capability) offer many new opportunities to reproduce sound that adapts and responds to your preferences, your activities, and what's happening around you. For example, they could change the properties of what you're listening to depending on whether you are on a noisy train or in a quiet library, or reproduce a telephone conversation so that the talker seems to come from whichever position makes them as clear and intelligible as possible.

The InHEAR project will develop new algorithms to maximise the listener's experience regardless of their listening context. The project brings together three doctoral researchers spanning disciplines including sound analysis, perceptually optimised audio rendering and neuroscience.

Audio rendering

Milap Rane (IoSR) will focus on audio rendering and on evaluating the user experience. A musician at heart and a technologist by education, Milap stumbled into signal processing as a student of Electronics and Telecommunications Engineering. He learned signal processing theory in class and applied it in real time through plug-ins in his freelance music production projects. This fascinated him and made him realise that the intersection of music and technology was the field he wanted to work in. With this in mind, he went on to complete a Master's in Music Technology at Georgia Tech. This led to a journey in which he developed products such as Popsical Remix, the world's smallest karaoke machine, and BeneTalk, a wearable device that helps people who stammer improve their speech. He is also a performing Indian classical musician and has performed at venues around the world. His PhD is supervised by Dr Philip Coleman and Dr Russell Mason, and is supported by Bang & Olufsen and Surrey's Doctoral College.

The PhD work will involve studying various rendering methods, such as those based on sound-field analysis, amplitude panning and binaural techniques, to determine which are suitable for a wearable device and which lead to the best spatial sound experience over headphones. It will also involve designing test scenarios to evaluate these algorithms and/or designing adjustments or improvements to existing ones. It could additionally involve determining how metadata (especially EEG and attention-based data) can be converted more effectively to enhance the experience of listening with earphones.

Environment audio capture and analysis

Andrés Estrella Terneux (CVSSP) will focus on analysing the listening environment and feeding this information into the audio rendering. He received his MSc in Electrical Engineering from Blekinge Institute of Technology, during which he developed an algorithm to locate low-flying aircraft by combining audio and video, using the novel Microflown sensor. More recently, as part of a multidisciplinary team, he applied a likelihood-ratio audio detector based on Gaussian mixture model classification to study legacy recordings of frog communities calling in the Ecuadorian Amazon. He also creates digital art based on soundscapes and ecoacoustics. His favourite place to do research is near a national park. His PhD is supervised by Dr Philip Jackson and Dr Philip Coleman, and is supported by Bang & Olufsen and Surrey's Doctoral College.

The PhD work will involve analysing binaural audio from the hearables' built-in microphones to capture environmental sound events and represent them as objects relevant to context-aware, object-based audio rendering, so as to enhance the experience of listening with earphones. Suitable statistical audio signal processing algorithms will be explored for binaural sound source separation, localisation and/or classification on the wearable's internal processing unit, and for generating audio object metadata. This will also involve designing relevant experimental scenarios to evaluate the performance of existing algorithms, and/or designing adjustments or improvements to them.

Analysis of brain signals

Adèle Simon (B&O) will investigate how brain signals, e.g. from EEG, can be used to track auditory attention, and the links between auditory attention and perceived sound quality. She holds an MSc in acoustics, signal processing and computer science applied to music from Ircam/Pierre and Marie Curie University, and an MA in music psychology and neurosciences from the University of Jyväskylä, where she explored the perception of binaural audio through physiological signals. Her PhD is supervised by Dr Søren Bech (B&O), Dr Jan Østergaard (Aalborg University) and Dr Gérard Loquet (Aalborg University), and is supported by Bang & Olufsen and the Danish Innovation Fund.

The PhD work will involve measuring brain signals during ecologically valid scenarios of music listening in noise and exploring various EEG processing methods, in order to develop ways of tracking the attention of wearable users based on their cortical activity. It will also explore the links between auditory attention and perceived sound quality, to determine how brain-measured attention can be used as an input to enhance the experience of listening with earphones.

Publications

- Simon, A., Bech, S., Loquet, G., & Østergaard, J. (2022, July). Electrodes selection for cortical auditory attention decoding with EEG during speech and music listening. In 25th International Conference on Information Fusion (FUSION), Linköping, Sweden (pp. 1-6). IEEE.

- Simon, A. M. D., Østergaard, J., Bech, S., & Loquet, G. S. J. M. (2022, June). Optimal time lags for linear cortical auditory attention detection: differences between speech and music listening. In 19th International Symposium on Hearing, Lyon, France.

- Rane, M., Coleman, P., Mason, R., & Bech, S. (2022, May). Survey of User Perspectives on Headphone Technology. In 151st Audio Engineering Society Convention, Online, Paper No. 10556. https://doi.org/10.15126/900449